I was listening to Reason's Just Asking Questions podcast episode about the Department of Government Efficiency (DOGE), and I thought host Liz Wolfe was too willing to accept Elon Musk and DOGE at face value. I'd noticed a similar attitude on the Reason Roundtable last week. I get where they're coming from. After years watching people suffer at the hands of government employees, …

Late night thoughts on the current crisis

I've been watching what's happening in the U.S. government with growing dismay. Trump and Musk seem determined to destroy the government's ability to perform certain functions, some of which are very important to the United States, and some of which are very important to the world. And it turns out that many of the safeguards against this destruction are controlled by the …

Joining The Cult

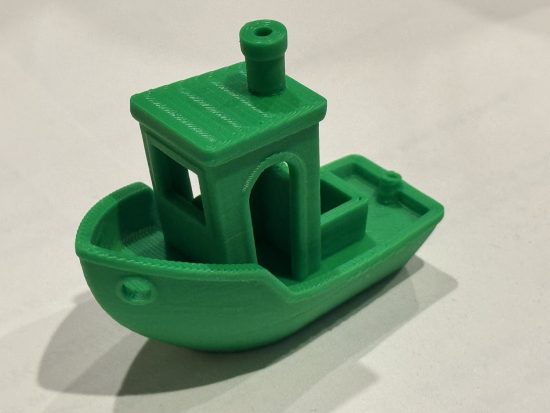

I've been curious about 3D printing for years. It's cool technology that looks like fun to play with. The problem was that I didn't have many ideas about what sorts of things to make with a printer. I'd feel pretty silly if I spent hundreds of dollars on a printer and then didn't use it for anything after the first week. For that reason, I could never justify the cost enough to …

Trump’s dumb attempt to define sex

(When I started to write this post, I thought it would be a neat little think piece about science, policy, and meaning. But given how much more is going on, it now feels a bit academic. Nevertheless, I took the time to write it, so I might as well hit the Publish button...) Trump's anti-transgender executive order (the one from last week, not the new ones from this week. …

Some advice for my transgender readers in the new year

There has been a surge of anti-trans violence in the last few years, including attacks leading to at least 36 deaths, and given the direction our country is going, it would not be surprising to see even more violence in the future. Given that possibility, I have some advice for my transgender readers--Wait, what? A middle-aged white cishet male has advice for trans …