[Update: This was one of my think pieces, where I'm having trouble understanding something and try to work it out in writing. In retrospect, I think I go down the wrong path when discussing the murder charge. Sorry.] So Amber Guyger was found guilty of murder, and I'm still trying to make sense of it, but I think I'm probably okay with it. A Dallas County jury on Tuesday … [Read more...] about Thinking About Amber Guyger and the Castle Doctrine

Crime and Punishment

Model Justice — Starting Over

It's been a while since I wrote about my Model Justice project, which is an attempt to build a software model of certain aspects of the criminal justice system. In my last post on the subject, I explained why I was abandoning the Insight Maker systems modeling environment in favor of the Python general-purpose programming language. Since then, I've been slowly … [Read more...] about Model Justice — Starting Over

Model Justice — Stalled On the Learning Curve

The "Model Justice" series of posts (starting here) is chronicling my attempts to build a software model of certain aspects of the criminal justice system. As I explained in the most recent post, my first attempt at building a model ran into a dead end, so now I'm figuring out what to do for my next attempt. I've identified several possible ways the model could be improved, … [Read more...] about Model Justice — Stalled On the Learning Curve

Model Justice – Plea Bargaining Blues

As I explained in the first post of this series, I'm trying to build a computer model of certain aspects of the criminal justice system. This week, it's not going well. My goal is to build a model of how our justice system handles plea bargains, with an eye toward understanding (1) why a system built around the highly formalized decision-making mechanism of the trial doesn't … [Read more...] about Model Justice – Plea Bargaining Blues

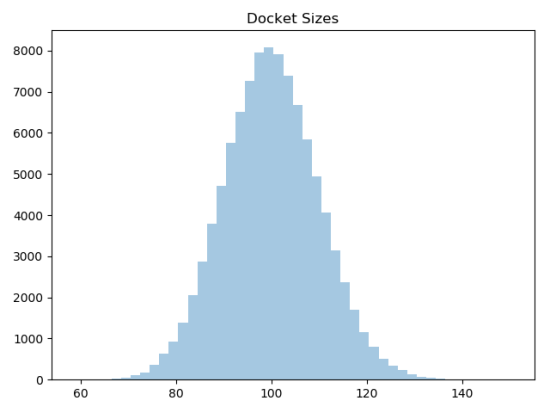

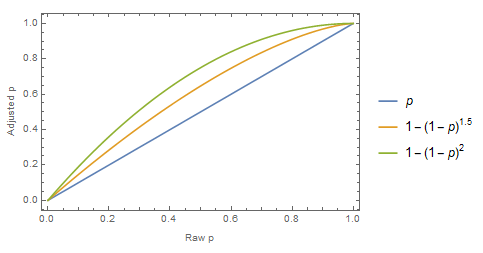

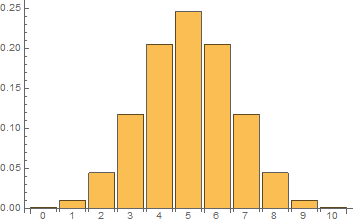

Model Justice 1.1 – Random Guilt

One of the limitations of the Model Justice 1.0 model is that every defendant has the exact same probability of being found guilty at trial. That's unrealistic, and it's unrealistic in a way that will matter: The estimation of the probability of a conviction at trial has got to be one of the most important factors in whether a prosecutor decides to offer a plea deal and whether … [Read more...] about Model Justice 1.1 – Random Guilt
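To make the contrast concrete, here is a minimal Python sketch of the difference between giving every defendant the same conviction probability and giving each defendant a randomly drawn one. It is not the Model Justice code: the function names, the fixed value of 0.7, and the choice of a Beta distribution are all assumptions made for illustration.

```python
import random


def fixed_guilt(n_defendants, p_conviction=0.7):
    """Every defendant gets the same conviction probability (0.7 is an assumed value)."""
    return [p_conviction] * n_defendants


def random_guilt(n_defendants, rng, alpha=2.0, beta=2.0):
    """Each defendant gets their own conviction probability.

    Drawing from a Beta(alpha, beta) distribution is an assumption for this
    sketch; any distribution on the interval [0, 1] would serve.
    """
    return [rng.betavariate(alpha, beta) for _ in range(n_defendants)]


def simulate_trials(probabilities, rng):
    """Return one verdict per defendant: True means convicted at trial."""
    return [rng.random() < p for p in probabilities]


rng = random.Random(42)  # seeded so repeated runs give the same draws
probs = random_guilt(1000, rng)
verdicts = simulate_trials(probs, rng)
conviction_rate = sum(verdicts) / len(verdicts)
print(f"Conviction rate across {len(verdicts)} simulated trials: {conviction_rate:.2f}")
```

With per-defendant probabilities, a simulated prosecutor or defendant could base a plea decision on the estimated chance of conviction in that particular case, which is not possible when every case looks identical.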